GSA SER Verified Lists Vs Scraping

Why the Source of Your Targets Matters

Every backlink campaign built with GSA Search Engine Ranker depends on one critical factor: the quality of your target URLs. Two dominant approaches exist for feeding the software—using pre-curated verified lists or relying on built-in scraping engines. Each method shapes your success rate, domain diversity, and overall link profile risk. Understanding the trade-offs between GSA SER verified lists vs scraping helps you choose the right strategy without wasting time or resources.

What Exactly Are GSA SER Verified Lists?

Verified lists are collections of URLs that have already been tested and confirmed as working by other GSA SER users. Typically sold or shared in niche communities, they claim to contain platforms where the software can successfully register and post. They come in formats like .txt or .csv and are simply imported into the “Target URL†field.

Common varieties include:

- General verified lists (mixed platform types)

- Engine-specific lists (e.g., only WordPress or Joomla targets)

- Country-targeted verified lists

- “Premium†monthly subscription packs

The Pros of Using Verified Lists

- Instant momentum: Drop the file in and the software starts posting immediately, bypassing the slow initial search phase.

- No scraping overhead: Your proxies and bandwidth go directly to submission, not hunting for targets.

- Predictable volume: You know exactly how many potential URLs you’re working with from the start.

- Useful for testing: They let you validate your entire setup—captcha solvers, email accounts, and threads—before diving into deeper scraping runs.

The Drawbacks You Can’t Ignore

- Rapid decay: A verified list ages the moment it’s compiled. Platforms delete spam, change login routines, or go offline, shrinking the usable portion daily.

- Massive footprint overlap: Thousands of other marketers are using the same list. Your backlinks end up on the exact same domains, creating a painfully obvious link pattern.

- Zero diversity: You’re limited to what someone else found. Untapped platforms, niche-specific engines, and newly indexed sites remain invisible.

- Irrelevant placement: Lists rarely align with your niche. A general verified list might post your fitness article to a forum about car repairs, harming relevance signals.

How the Scraping Approach Works in GSA SER

Scraping means letting GSA SER build its own target list on the fly using configured search engines. The software takes your keywords, sends them to platforms like Google, Bing, Yandex, or hundreds of smaller footprint-based scrapers, and harvests the resulting URLs. It then analyzes each page for supported platform footprints before attempting to register and post.

This isn’t a one-click process. It requires a well-tuned engine selection, reliable proxies, and thoughtful keyword rotation to generate a steady flow of fresh, contextual targets.

Why Scraping Often Outperforms Static Lists

- Freshness guarantee: Targets are discovered in real time. You’re not relying on days-old verification statuses—you engage sites that are currently active.

- Natural footprint diversity: Because every scraping session yields a different mix of domains, your link profile grows across a broad, scattered set of IPs and nameservers.

- Topical proximity: You control the keywords. Good keyword lists place your links on pages where the surrounding content matches your niche, boosting relevance.

- Exclusive targets: Many sites found through scraping haven’t been hammered by every person who bought a verified pack. This lowers the immediate spam-trigger rate.

The Hidden Costs of Scraping

- Proxy consumption: Search engines block aggressive scrapers rapidly. You’ll burn through a larger volume of proxies, especially if targeting strict engines like Google.

- Longer ramp-up: You won’t see high submission rates in the first few minutes. The software must search, harvest, test, and then post.

- Hardware and bandwidth demand: Scraping hundreds of URLs simultaneously can strain a VPS, requiring more cores, RAM, and a fast connection to avoid bottlenecks.

- Learning curve: You need to manage search engine lists, retry limits, OCR settings, and the site lists that store harvested links properly.

GSA SER Verified Lists vs Scraping: A Direct Comparison

Both methods can be used separately or together, but their core philosophies differ. The following breakdown highlights when one edge outweighs the other.

Speed of Deployment

- Verified lists: Immediate. Import, click start, and verified platforms begin accepting posts within seconds.

- Scraping: Delayed. The initial search-and-identify cycle can take minutes to hours depending on engine limits and proxy quality.

Link Footprint Quality

- Verified lists: High footprint overlap. Identical domains appear across countless link profiles, making it easy for search engines to devalue them.

- Scraping: Low overlap. Your harvested domains form a unique set, blending more naturally into a tiered link building structure.

List Longevity

- Verified lists: Short shelf life. A list purchased today might be half-dead in a week. Continuous re-verification is necessary, or you’re hitting dead ends.

- Scraping: Self-renewing. As long as your proxies, keywords, and engines are working, new targets flow in without manual intervention.

Relevance Control

- Verified lists: Minimal. You can’t dictate where the links land; the list is agnostic to your content.

- Scraping: High. By rotating niche keywords, you guide the software toward pages with matching terms, boosting anchor text context.

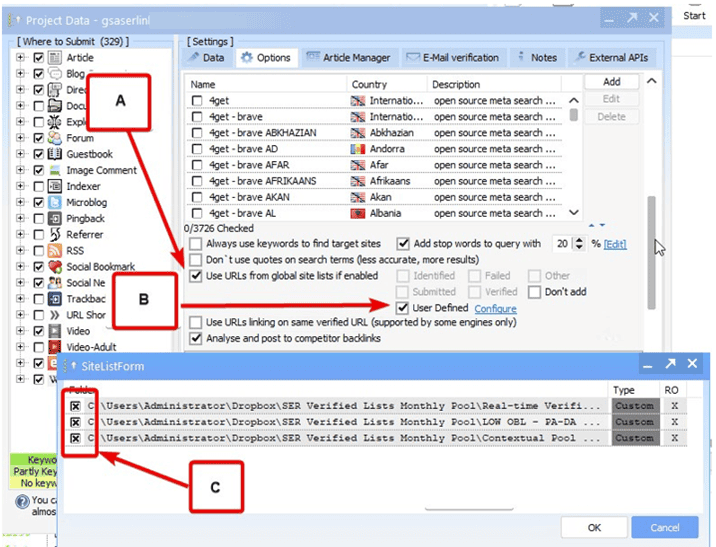

here

Cost Profile

- Verified lists: One-time or subscription cost, plus very light proxy usage. Ideal for budget-conscious test runs.

- Scraping: Ongoing proxy expense, higher server requirements, and more captcha-solving credits to harvest at scale.

When to Use Each Method Effectively

It’s rarely an either/or decision. Experienced users often blend both to maximize output while controlling quality. Here’s a practical guide:

Scenarios Favoring Verified Lists

- Initial setup verification: Before tweaking complex scraping settings, run a small verified list to confirm captcha services, email accounts, and posting engines work.

- Burst link needs: When building quick tiers for lower-level properties where diversity matters less, verified lists provide bulk links instantly.

- Engine-specific testing: If you need to test a new custom engine script, a verified list of that exact platform type gives you a clean control group.

- Proxy-poor environments: On a VPS with limited proxy resources, a verified list lets you post without triggering search engine bans.

Scenarios Where Scraping Dominates

- Tier 1 and Tier 2 link building: Contextual, diverse targets found through scraping create more resilient buffer layers.

- Niche penetration: You want links on pages that discuss your topic. Scraping with long-tail keywords discovers those hidden pockets.

- Long-term campaigns: A single well-configured scraping campaign can run for months, constantly replenishing its target pool with zero manual list updates.

- Footprint diversification: To avoid patterns, scraping across multiple country engines, image search, and meta-scrapers builds a complex, natural-looking backlink graph.

Building a Hybrid Strategy

Think of verified lists as a starter culture and scraping as the self-sustaining farm. One approach that works well:

- Import a small, recent verified list to prime the software and generate immediate submissions while the scrapers warm up.

- Enable a curated set of 30–50 scrapers — prioritize less-aggressive engines (Rambler, Yep, Mojeek) to keep proxy bans low.

- Feed the scraper keywords that match your money page topic and rotate them daily.

- Let the campaign run. Verified links fill the early gap, then scraping takes over as the primary source of fresh URLs.

- Enable the “automatically remove verified links after X days†option if available, or periodically clear the verified URL list to prevent re-posting to dead domains.

Frequently Asked Questions

Can a verified list be scraped to make it larger?

Not directly. A verified list is a static file. You could theoretically use its URLs as a seed to find similar sites through crawling tools, but GSA SER’s scrapers use keywords and footprints, not URL-to-URL discovery. However, analyzing successful domains from a verified list can help you craft better footprint strings and keywords for your scraping campaigns.

Why do my verified lists stop producing links so quickly?

Verified lists are a snapshot of a moving target. The moment they’re created, site administrators delete spam accounts, security plugins update, and hosting companies suspend abusive domains. Without constant re-verification by the seller, the list decays exponentially. Additionally, heavy shared usage accelerates bans because the same IP ranges hit the same sites repetitively.

Do I need different proxies for scraping and posting?

Yes. A common mistake is using the same proxies for both. Scraping burns through search engine bans fast, and you don’t want your posting proxies blacklisted before you even submit. Ideally, use dedicated scraping proxies (rotating datacenter or residential backconnects) and a separate pool for posting to the targets. This keeps your submission IPs clean for longer.

Which scraping engines should I enable to mimic a verified list’s volume?

Prioritize meta-scrapers and less-known engines. Enable “Google (via scraper),†“Bing (old API),†“Yandex,†“YouTube (for comment footprints),†and the many platform-specific scrapers like “WordPress - Search†or “Joomla - Search.†Avoid relying solely on Google; it caps queries aggressively. Mixing 40–60 engines often yields a steady flow without tripping rate limits.

How do I verify a purchased verified list is genuine?

First, scan the file with GSA SER’s built-in filter tool to remove duplicates, non-HTTP links, and improper formats. Then, import only 10% of the list into a test campaign with tight logging enabled. Check if the software successfully creates accounts or posts. Genuine lists still possess a sizeable working fraction; if fewer than 5% result in an active submission, the list is stale or scraped from public pastebins without actual verification.

Is it possible to build a completely unique target database without scraping?

Not sustainably. You can manually gather URLs, use footprints in search operators, or harvest from site lists, but those methods are essentially manual scraping. Automated scraping remains the only way to generate large, unique target pools continuously without human intervention. Relying solely on verified lists always reintroduces shared footprint risk.

Making the Final Choice

The decision between GSA SER verified lists vs scraping shouldn’t be framed as loyalty to one method. Let campaign goals dictate the mix. For fast, low-risk testing or bulk low-tier blasts, a trustworthy verified list saves time. For any layer that passes equity to a money site, scraping provides the diversity and contextual wrapping that modern algorithms expect. Monitor your success rates, rotate your approach, and never let your entire campaign depend on a single source of targets.